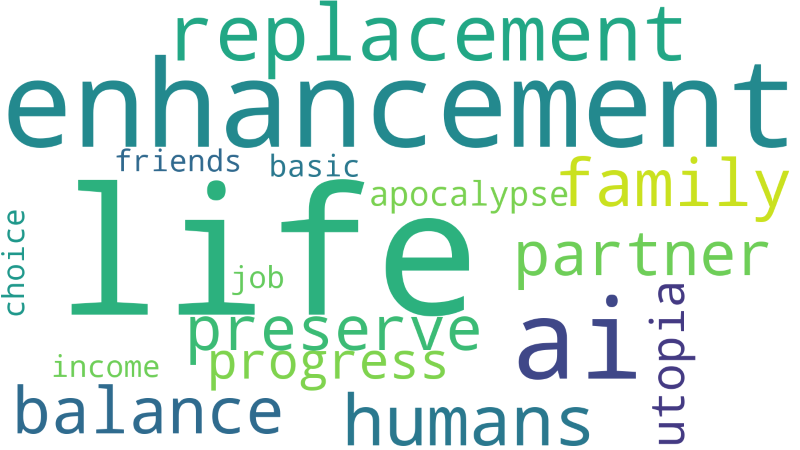

Life Enhancement or Life Replacement: The 3P Framework Puts You in Control

You don't have to choose between AI utopia and apocalypse. The 3P Framework gives you a third option to shape your own AI future.

This article was originally published on Medium

It’s 11 PM, and Sarah is doom-scrolling again. One headline screams that AI will cure cancer and end poverty within five years. The next warns that ChatGPT will eliminate 300 million jobs by 2030. A tech CEO promises universal basic income and a post-work paradise. A prominent researcher predicts superintelligent AI will destroy humanity.

Sarah closes her laptop, exhausted and anxious. She’s a teacher, a mother, a human being trying to navigate her actual life. But the AI conversation seems to happen somewhere above her head, between billionaires and doomsayers, as if she’s merely a spectator waiting to see whether the future will be heaven or hell.

Here’s what Sarah doesn’t realize yet: She’s not a spectator. She has more control than she thinks.

While experts debate extremes, 8 billion humans like Sarah are living in the messy, complicated middle — and that’s where the real decisions get made. Not in boardrooms or research labs, but in daily choices about how we work, create, connect, and live.

The question isn’t whether AI will enhance or replace human life. The question is: Will you actively choose enhancement, or passively accept replacement?

That choice requires a framework. Not another prediction about the future, but a practical tool for navigating it. That’s what the 3P Framework provides: a way to take control of your AI future, starting today.

Separating Dreams from Reality

Let’s start by being honest about what AI actually can and cannot do.

The Paradise We’re Promised

The utopian narrative sells us a seductive vision:

- Universal Basic Income will solve all economic inequality once AI generates unlimited wealth

- AI will eliminate disease, from cancer to aging itself, making humans effectively immortal

These aren’t completely baseless. AI has made remarkable progress in drug discovery, protein folding, and pattern recognition. Some form of economic restructuring will likely become necessary as automation advances.

But here’s the reality check:

AI excels at pattern recognition, not wisdom. It can identify correlations in medical data, but it can’t hold a grieving family’s hand or help a patient navigate impossible treatment decisions. Even if AI perfectly identifies every disease, human healing involves touch, presence, and emotional support that no algorithm can provide.

Creativity requires more than generation. AI can produce images, text, and music at scale. But creation without intention, context, or lived experience is just sophisticated mimicry. Humans don’t just make art — we make meaning.

Every version of the paradise myth makes the same fundamental error: assuming that technological capability automatically translates to human flourishing. It doesn’t. Paradise requires human choices about how to deploy AI, not just AI advancement itself.

The Apocalypse We’re Warned About

The dystopian camp warns us:

- Superintelligent AI will emerge suddenly and take over

- All jobs will disappear overnight, leaving billions unemployed

- Humans will become obsolete, outcompeted by superior AI intelligence

Again, these fears aren’t entirely unfounded. AI systems can behave unpredictably. Automation does displace workers. Power concentrates around whoever controls the most powerful technology.

But let’s get perspective:

Today’s AI is sophisticated pattern matching, not sentient intelligence. ChatGPT doesn’t “understand” language the way humans do. It predicts likely word sequences based on training data. Image generators don’t “see” the way we do.

Jobs evolve; they rarely disappear wholesale. Historically, automation has tended to eliminate specific tasks rather than entire occupations, and new roles have emerged alongside it. The transition can be painful, but precedent suggests transformation, not elimination.

Human obsolescence confuses capability with value. Just because AI can perform certain cognitive tasks doesn’t make humans worthless. A calculator can do arithmetic faster than any human, yet that never made mathematicians obsolete. Human value isn’t reducible to task performance. We matter because we’re conscious beings with dignity, relationships, and subjective experience.

The real risks are human, not robotic. The genuinely scary scenarios don’t involve AI spontaneously becoming evil. They involve humans using AI for surveillance, manipulation, autonomous weapons, or economic exploitation. The danger isn’t AI rebellion — it’s human carelessness, greed, and malice amplified by powerful tools.

Here’s the key distinction: The danger isn’t AI becoming too smart. It’s humans being too careless.

Most apocalyptic fears are misplaced. The real challenges are about governance, ethics, distribution of benefits, and ensuring AI systems remain accountable to human values. These are difficult problems, but they’re fundamentally human problems requiring human solutions.

Life Enhancement: The Boring, Beautiful Truth

So if the future isn’t paradise or apocalypse, what is it?

Hopefully, it’s something less dramatic and more interesting: ongoing human-AI collaboration that enhances human capabilities without replacing human agency, judgment, or meaning-making.

This is already happening right now, in ways that don’t make headlines but quietly improve actual human lives.

The principle is simple: AI should augment human capabilities, not replace human agency.

The 3P: A Framework for the Enhancement Era

So how do we navigate this? How do we decide what to automate, what to augment, and what to keep purely human?

I propose a simple framework: The Three Ps — Preserve, Partner, Progress.

Think of these as three categories for any human activity or domain:

PRESERVE: What Must Remain Human

These are domains where AI can’t or shouldn’t replace humans — where the human element is the entire point, or where automation would diminish something essential about the activity.

Examples:

- Ethical deliberation and moral judgment — Deciding what’s right requires lived experience, values, and the weight of consequences that only conscious beings can bear

- Embodied empathy and emotional care — Comforting a grieving friend, sitting with someone in pain, the physical touch that communicates “you’re not alone”

- Physical activities done for their own sake — Playing basketball for joy, not optimization; hiking without tracking metrics; dancing because it feels good

The test: “Does automation diminish human dignity or connection?”

If automating something makes the activity lose its meaning or harms human relationships, it belongs in the Preserve category. Not because AI couldn’t theoretically do it, but because we shouldn’t want it to.

PARTNER: Where Human + AI Exceeds Either Alone

These are domains where collaboration produces superior outcomes to either humans or AI working independently.

Examples:

- Medical diagnosis — AI analyzes imaging and data, doctors apply clinical judgment and patient knowledge

- Scientific research — AI processes massive datasets, humans form hypotheses and design experiments

- Creative ideation — AI generates options and variations, humans curate and refine

The test: “Does AI enhance human capability without replacing human agency?”

If the combination of human judgment and AI capability creates better outcomes than either alone, and if the human remains the decision-maker, it belongs in Partner. This is where most of AI’s practical value lies.

PROGRESS: What We Should Intentionally Advance

These are domains where we should actively develop AI to take over completely — tasks that are dangerous, degrading, or simply waste human potential.

Examples:

- Dangerous work — Deep mining, hazardous waste handling, disaster zone assessment

- Repetitive drudgery — Data entry, spam filtering, document formatting

- Computational tasks — Mathematical calculations, database queries, system monitoring

The test: “Does automation free humans for more meaningful work?”

If a task is dangerous, mind-numbing, or wastes human potential, we should actively work to automate it. The goal isn’t to preserve jobs for their own sake, but to free humans from work that diminishes us.

The 3P framework puts humans back in the driver’s seat. We’re not passive recipients of whatever AI capabilities emerge. We’re active decision-makers choosing what kind of future to build.

What Humans Must Do: The Dual Cultivation Strategy

But understanding the framework is only half the battle. The harder part is actually living it — especially when every app, platform, and service is designed to increase your engagement and dependency on AI systems.

Here’s the central challenge: As we develop AI systems, we must simultaneously cultivate our distinctly human capabilities.

Think of it as two parallel tracks that must advance together:

Track 1: Proper AI Systems Development

We need to build AI with human values at the center. This means demanding four key principles in every AI system:

- Transparency: Can we explain how it works and why it made a specific decision?

- Accountability: Who’s responsible when it fails or causes harm?

- Reversibility: Can humans override AI decisions?

- Alignment: Does it serve human flourishing, not just efficiency or profit?

These aren’t just principles for AI developers. They’re questions every user, buyer, and citizen should ask about the AI systems they interact with. When your employer implements AI hiring tools, ask about transparency and accountability. When your city considers AI policing, demand reversibility and alignment with civil rights.

Track 2: Cultivating Physical Activities & Human-Only Behaviors

Here’s what almost nobody talks about: As AI takes over more cognitive tasks, humans need to intentionally cultivate our physical, social, and emotional capabilities.

AI is inherently non-physical. It processes information at digital speeds. As AI excels at cognitive tasks, there’s a real risk that humans become increasingly sedentary, isolated, and disembodied — living more and more of our lives in our heads or through screens.

The counterbalance must be intentional: We need to reclaim and celebrate our physical existence.

This means:

- Physical movement and manual skills: Exercise for how it feels, not what the fitness tracker says. Play sports for joy, not performance data. Dance, hike, swim, build things with your hands. Cook, garden, work with wood or clay: activities where the process matters as much as the product, where you learn through physical trial and error.

- Face-to-face social connection: Shared meals without devices. Conversations without the ability to look everything up instantly. Community gatherings, group sports, collaborative projects that require physical presence and real-time coordination.

- Unstructured time and boredom: Resisting the urge to fill every gap with AI entertainment or optimization. Letting your mind wander. Daydreaming. Sitting with discomfort. Creating space for thoughts to emerge naturally rather than being fed to you by algorithms.

The future isn’t human OR AI. It’s intentionally human AND strategically AI.

The sweet spot is integration: Let AI handle what it does best, while we focus on what makes us most human.

The Real Choice

The choice isn’t paradise versus apocalypse. It’s not even about choosing between optimism and pessimism about AI.

The actual choice is this: Will we use AI as a tool for human flourishing, or will we allow it to define what humans are for?

Paradise and apocalypse are both stories where humans are passive — either saved by AI or destroyed by it. Enhancement is a story where humans are active agents, making deliberate choices about what to automate, what to augment, and what to preserve as uniquely human.

The AI era isn’t something happening TO humans. It’s something we’re creating FOR humans.

But that only happens if we insist on it. If we demand transparency and accountability. If we use the 3P framework to make intentional decisions rather than accepting whatever AI capabilities emerge. If we cultivate our physical, social, and emotional lives as deliberately as we develop AI systems. That’s the real choice.